ACM Posters – Student Research Competition

Adaptive Multidimensional Quadrature on Multi-GPU Systems

The present work introduces a distributed adaptive deterministic quadrature framework for high-dimensional integration on multi-GPU systems, enabling accurate evaluation of integrals arising in applications such as radiative transfer and probabilistic design. The method is formulated as a hierarchical domain decomposition: the integration domain is recursively subdivided and, at each iteration, all subdomains contributing significantly to the global error are refined in parallel. As each GPU progresses independently through the adaptive refinement, workload imbalance naturally arises. To address this, a decentralised redistribution scheme based on a cyclic round-robin policy is employed. This strategy dynamically rebalances the workload across devices through non-blocking, CUDA-aware MPI communication that overlaps with computation. The proposed approach preserves the strict error guarantees of deterministic quadrature while enabling scalable execution on modern HPC platforms. Extensive benchmarks on oscillatory, sharply peaked, and discontinuous test functions demonstrate improved robustness and efficiency compared to a state-of-the-art GPU integration package, particularly at high accuracy and in large dimensions. Using multiple GPUs overcomes single-device memory limitations and enables integration of up to 11 dimensions, while reducing runtimes for strict accuracy targets.

Author(s): Melanie Tonarelli (Università della Svizzera Italiana)
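The refinement loop described in the quadrature abstract can be illustrated with a minimal one-dimensional sketch. It rests on several assumptions, none of which the abstract confirms as the author's choices: a two-level Simpson error estimate, a volume-proportional acceptance rule, and a simple cyclic dealing of regions to devices in place of the decentralised, CUDA-aware MPI round-robin scheme. The names `adaptive_integrate` and `round_robin` are hypothetical.

```python
from typing import Callable, List, Tuple

Region = Tuple[float, float]  # a 1-D subdomain [lo, hi]


def simpson(f: Callable[[float], float], a: float, b: float) -> float:
    """Simpson's rule on [a, b]."""
    m = 0.5 * (a + b)
    return (b - a) / 6.0 * (f(a) + 4.0 * f(m) + f(b))


def estimate(f: Callable[[float], float], a: float, b: float) -> Tuple[float, float]:
    """Integral on [a, b] plus an error estimate from comparing two Simpson levels."""
    m = 0.5 * (a + b)
    coarse = simpson(f, a, b)
    fine = simpson(f, a, m) + simpson(f, m, b)
    return fine, abs(fine - coarse)


def adaptive_integrate(f: Callable[[float], float], a: float, b: float,
                       tol: float = 1e-8, max_iters: int = 50) -> Tuple[float, float]:
    """Breadth-wise refinement: every region with too large an error is split in the same iteration."""
    length = b - a
    active: List[Region] = [(a, b)]
    total = err_total = 0.0
    for _ in range(max_iters):
        if not active:
            break
        # On a GPU, this evaluation would run over all active regions in parallel.
        results = [estimate(f, lo, hi) for lo, hi in active]
        next_active: List[Region] = []
        for (lo, hi), (val, err) in zip(active, results):
            if err <= tol * (hi - lo) / length:
                # Error within the region's volume-proportional share of tol: finalize it.
                total += val
                err_total += err
            else:
                mid = 0.5 * (lo + hi)
                next_active += [(lo, mid), (mid, hi)]
        active = next_active
    # Regions still unresolved after max_iters are accepted as they are.
    for lo, hi in active:
        val, err = estimate(f, lo, hi)
        total += val
        err_total += err
    return total, err_total


def round_robin(regions: List[Region], n_devices: int) -> List[List[Region]]:
    """Deal regions to devices cyclically so region counts differ by at most one."""
    buckets: List[List[Region]] = [[] for _ in range(n_devices)]
    for i, region in enumerate(regions):
        buckets[i % n_devices].append(region)
    return buckets
```

For instance, `adaptive_integrate(lambda x: x ** 4, 0.0, 2.0)` returns a value within 1e-8 of 6.4. Each finalized region's error estimate is at most its volume-proportional share of `tol`, so the summed estimate over finalized regions cannot exceed `tol`. This mirrors, in miniature, the kind of error guarantee the abstract stresses.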

Data Augmentation to Improve the Performance of Deep Learning-based Seismic Inversion

Deep Learning-based Seismic Inversion (DLI) is a promising alternative to Full Waveform Inversion (FWI), but struggles with data scarcity. This work evaluates data augmentation techniques adapted from computer vision to address this bottleneck, and demonstrates that generating synthetic earth models, even those introducing features that aren’t present in the source data, can significantly improve inversion accuracy.

Author(s): Carlos Gomes de Carvalho Junior (Universidade Federal de São Carlos)
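One way to produce synthetic earth models, including features absent from the source data, is sketched below under stated assumptions. The layers are flat, with velocity increasing with depth. The grid is 70 × 70 and velocities span 1500 to 4500 m/s, chosen to resemble common OpenFWI-style models. An inserted elliptical body plays the role of the "new" feature. The functions are hypothetical illustrations, not the generator used in this work. In practice each model would also need forward modeling to obtain matching seismic data.

```python
import random
from typing import List, Optional

Model = List[List[float]]  # velocity in m/s, indexed [depth][x]


def layered_model(nz: int = 70, nx: int = 70, n_layers: int = 4,
                  vmin: float = 1500.0, vmax: float = 4500.0,
                  seed: Optional[int] = None) -> Model:
    """Flat layers whose velocity increases with depth (a simple, common prior)."""
    rng = random.Random(seed)
    # Distinct interface depths strictly below the surface row.
    interfaces = sorted(rng.sample(range(1, nz), n_layers - 1))
    velocities = sorted(rng.uniform(vmin, vmax) for _ in range(n_layers))
    model: Model = []
    for z in range(nz):
        layer = sum(1 for depth in interfaces if z >= depth)
        model.append([velocities[layer]] * nx)
    return model


def insert_ellipse(model: Model, cz: float, cx: float, rz: float, rx: float,
                   velocity: float) -> Model:
    """Overwrite an elliptical body, e.g. a feature absent from the source data."""
    for z, row in enumerate(model):
        for x in range(len(row)):
            if ((z - cz) / rz) ** 2 + ((x - cx) / rx) ** 2 <= 1.0:
                row[x] = velocity
    return model
```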

Deep Reinforcement Learning for Algorithm Selection in Molecular Dynamics Simulations

Short-range particle simulations are crucial in physics and chemistry and require efficient neighbor-search and interaction algorithms. The C++ library AutoPas supports more than 100 algorithms, but no single algorithm is universally optimal, and manual tuning is impractical because simulation conditions change dynamically. AutoPas therefore implements automated tuning strategies such as full search, Predictive Tuning, and Reinforcement Learning. This work focuses on a Deep Reinforcement Learning (DRL) approach that adapts to simulation conditions in two phases: exploration (benchmarking algorithms) and exploitation (predicting the best algorithm via a neural network). For meta-parameter tuning, simulation runtime results are cached to avoid repeating expensive simulations. Evaluations show that DRL outperforms the Predictive and Reinforcement Learning strategies, and that meta-parameter tuning further enhances performance. DRL therefore offers a robust and adaptive solution for accelerating short-range particle simulations.

Author(s): Patrick Metscher (Technische Universität München)
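The runtime caching used for meta-parameter tuning amounts to memoizing simulation results by (scenario, configuration). All names below are hypothetical. `toy_tuner` is a deliberately simple placeholder that benchmarks the first `n_explore` configurations and keeps the fastest. It stands in for the DRL strategy and does not implement it.

```python
from typing import Callable, Dict, List, Tuple

Key = Tuple[str, str]  # (scenario, configuration)


class RuntimeCache:
    """Memoizes (scenario, configuration) -> runtime so each simulation runs only once."""

    def __init__(self, simulate: Callable[[str, str], float]):
        self._simulate = simulate
        self._table: Dict[Key, float] = {}
        self.simulations_run = 0

    def runtime(self, scenario: str, config: str) -> float:
        key = (scenario, config)
        if key not in self._table:
            self.simulations_run += 1
            self._table[key] = self._simulate(scenario, config)
        return self._table[key]


def toy_tuner(cache: RuntimeCache, scenario: str, configs: List[str], n_explore: int) -> str:
    """Placeholder strategy: benchmark the first n_explore configurations, keep the fastest."""
    return min(configs[:n_explore], key=lambda c: cache.runtime(scenario, c))


def meta_parameter_search(cache: RuntimeCache, scenarios: List[str], configs: List[str],
                          explore_values: List[int]) -> Tuple[int, Dict[int, float]]:
    """Score each meta-parameter value by the total runtime of the configurations it selects."""
    scores: Dict[int, float] = {}
    for n in explore_values:
        chosen = [toy_tuner(cache, s, configs, n) for s in scenarios]
        scores[n] = sum(cache.runtime(s, c) for s, c in zip(scenarios, chosen))
    return min(scores, key=scores.get), scores
```

Consider two scenarios, four configurations, and four meta-parameter values. Without the cache, the sweep would call the simulator 28 times, counting every exploration and every scoring run. With the cache, only the 8 distinct (scenario, configuration) pairs are simulated.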

Fine-Tuning Large Language Models for HPO-Term Recognition

Large language models (LLMs) offer a promising approach for extracting structured medical information from free-text clinical notes. This work investigates fine-tuning LLMs for Human Phenotype Ontology (HPO) term recognition, a core task in clinical genetics that requires accurate identification and normalization of phenotypic abnormalities. Multiple model architectures, including LLaMA2 and Falcon models at different parameter scales, are fine-tuned on annotated clinical data, with and without supplementary HPO ontology knowledge, using HPC infrastructure with NVIDIA A100 GPUs, and several extraction strategies are evaluated. The results show that LLaMA2 7B consistently outperforms larger and alternative models, indicating that increased model size does not necessarily improve performance in data-constrained clinical settings. Incorporating structured HPO knowledge improves training stability and generalization, while a joint “Spans and Terms” extraction approach yields the highest accuracy. The best-performing configuration achieves an F1 score of 0.6942 under exact matching and 0.7375 with fuzzy matching. These findings highlight the importance of model choice and extraction design for reliable phenotype normalization from clinical text.

Author(s): Srinithi Krishnamoorthy (Cornell University)
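The gap between the exact and fuzzy F1 scores can be made concrete with a small scorer. The abstract does not define fuzzy matching, so the sketch below rests on three assumptions. A prediction is a (span, HPO ID) pair. Exact matching requires both elements to agree. Fuzzy matching requires the same HPO ID plus a `difflib` similarity of at least 0.8 between spans. The threshold and the greedy one-to-one matching are illustrative choices.

```python
from difflib import SequenceMatcher
from typing import Callable, List, Tuple

Mention = Tuple[str, str]  # (text span, HPO term ID)


def exact_match(pred: Mention, gold: Mention) -> bool:
    return pred == gold


def fuzzy_match(pred: Mention, gold: Mention, threshold: float = 0.8) -> bool:
    """Same HPO ID and sufficiently similar span text (assumed definition of 'fuzzy')."""
    same_term = pred[1] == gold[1]
    similarity = SequenceMatcher(None, pred[0].lower(), gold[0].lower()).ratio()
    return same_term and similarity >= threshold


def f1_score(predicted: List[Mention], gold: List[Mention],
             match: Callable[[Mention, Mention], bool]) -> float:
    """Greedy one-to-one matching of predictions to gold mentions, then F1 = 2PR / (P + R)."""
    unmatched = list(gold)
    tp = 0
    for p in predicted:
        for i, g in enumerate(unmatched):
            if match(p, g):
                tp += 1
                del unmatched[i]  # each gold mention can be matched only once
                break
    precision = tp / len(predicted) if predicted else 0.0
    recall = tp / len(gold) if gold else 0.0
    return 2 * precision * recall / (precision + recall) if precision + recall else 0.0
```

Take gold mentions "short stature" (HP:0004322) and "seizures" (HP:0001250), and predictions "short stature" and "seizure" with the same IDs. Exact F1 is then 0.5 and fuzzy F1 is 1.0.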

Hypergraph Partitioning for Sparse Matrix Reordering

Fill-in during sparse matrix factorization remains a critical bottleneck in scientific computing. We present an efficient hypergraph partitioning approach for sparse matrix reordering based on the Clique-Node Hypergraph (CNH) representation, building on prior work by Çatalyürek et al. and Selvitopi et al. Our method transforms the sparsity pattern through an edge-clique cover, creating a hypergraph where cliques become nodes and original vertices become nets. Using a hypergraph partitioner, we generate a symmetric diagonal block form with a separator, then apply established ordering methods to each block. Across a benchmark suite of SuiteSparse matrices, our approach achieves fill-in reductions competitive with METIS, often outperforming it. This work demonstrates that hypergraph partitioning is a practical alternative for fill-in minimization.

Author(s): Ritvik Ranjan (ETH Zurich)
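The CNH construction can be sketched on a symmetric sparsity pattern. The greedy edge clique cover below is an assumed heuristic, since the abstract does not say how the cover is computed. In practice, the part assignment passed to `separator_and_blocks` would come from a hypergraph partitioner such as PaToH rather than being chosen by hand. Vertices whose nets are cut by the partition become separator vertices. All names are hypothetical.

```python
from typing import Dict, List, Set, Tuple


def adjacency(n: int, entries: List[Tuple[int, int]]) -> List[Set[int]]:
    """Symmetric sparsity pattern as adjacency sets (diagonal entries ignored)."""
    adj: List[Set[int]] = [set() for _ in range(n)]
    for i, j in entries:
        if i != j:
            adj[i].add(j)
            adj[j].add(i)
    return adj


def greedy_edge_clique_cover(adj: List[Set[int]]) -> List[List[int]]:
    """Cover every edge by some clique: grow each uncovered edge greedily into a clique."""
    covered: Set[Tuple[int, int]] = set()
    cliques: List[List[int]] = []
    for u in range(len(adj)):
        for v in sorted(adj[u]):
            if u > v or (u, v) in covered:
                continue
            clique = [u, v]
            for w in sorted(adj[u] & adj[v]):
                if all(w in adj[x] for x in clique):
                    clique.append(w)
            clique.sort()
            cliques.append(clique)
            covered.update((a, b) for a in clique for b in clique if a < b)
    return cliques


def clique_node_hypergraph(n: int, cliques: List[List[int]]) -> List[List[int]]:
    """CNH: cliques are the nodes; net v lists every clique that contains vertex v."""
    nets: List[List[int]] = [[] for _ in range(n)]
    for c_id, clique in enumerate(cliques):
        for v in clique:
            nets[v].append(c_id)
    return nets


def separator_and_blocks(nets: List[List[int]],
                         part: List[int]) -> Tuple[List[int], Dict[int, List[int]]]:
    """Vertices whose net spans several parts form the separator; the rest join one block."""
    separator: List[int] = []
    blocks: Dict[int, List[int]] = {}
    for v, net in enumerate(nets):
        parts = {part[c] for c in net}
        if len(parts) > 1:
            separator.append(v)
        else:
            # An isolated vertex (empty net) can go into any block; block 0 is used here.
            blocks.setdefault(parts.pop() if parts else 0, []).append(v)
    return separator, blocks
```

Consider the pattern with a triangle {0, 1, 2} plus the edge (2, 3); the cover is [[0, 1, 2], [2, 3]]. Putting the two cliques in different parts makes vertex 2 the separator. Ordering the blocks first and the separator last then gives the permutation [0, 1, 3, 2].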

Improving Deep Learning Based Seismic Inversion with Online Augmentation

This study investigates the application of data augmentation techniques to optimize Deep Learning-based Seismic Inversion (DLI), aiming to overcome the scarcity of labeled datasets in the industry. Using the OpenFWI benchmark, the study evaluates four incremental strategies: horizontal flipping, synthetic data generation via diffusion models, an innovative online augmentation loop, and a hybrid DLI-FWI approach. The online augmentation, which generates physically consistent input-output pairs in real time through forward modeling, proved more efficient and effective than diffusion-based methods, significantly reducing the Mean Absolute Error (MAE). The final methodology, which integrates network predictions with traditional Full-Waveform Inversion (FWI) refinement for complex geological cases, secured 12th place globally in the 2025 Geophysical Waveform Inversion competition.

Author(s): Lucas Souza (Federal University of São Carlos)
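Horizontal flipping of an OpenFWI-style sample must transform the seismic data and the velocity model consistently. This minimal sketch assumes that seismic data are laid out as [source][time][receiver] and velocity as [depth][x]. It also assumes that sources are placed symmetrically about the model center, so mirroring the model reverses the source order. These layout assumptions and the `flip_pair` name are illustrative.

```python
from typing import List, Tuple

Seismic = List[List[List[float]]]  # [source][time][receiver]
Velocity = List[List[float]]       # [depth][x]


def flip_pair(seismic: Seismic, velocity: Velocity) -> Tuple[Seismic, Velocity]:
    """Mirror a (data, model) pair left-right so the pair stays physically consistent."""
    # Mirror the model along the horizontal axis.
    flipped_velocity = [row[::-1] for row in velocity]
    # Mirror receivers within each time sample, and reverse the shot order, because the
    # leftmost source of the mirrored model is the rightmost source of the original.
    flipped_seismic = [[samples[::-1] for samples in shot] for shot in reversed(seismic)]
    return flipped_seismic, flipped_velocity
```

Applying the flip twice recovers the original sample, which is a cheap sanity check for the layout assumptions.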

Matrix-Free vs. Matrix-Based Finite Element Solvers for 3D Advection–Diffusion–Reaction Equations

High-order finite element methods are attractive for three-dimensional advection–diffusion–reaction (ADR) problems, but their efficiency on distributed-memory systems is often limited by memory traffic and communication in large linear solves. This work compares matrix-based (MAT) and matrix-free (MF) finite element solvers for a 3D ADR model on CPU-based HPC systems using deal.II. Both approaches use the same high-order Q3 discretization, mesh partitioning, and pure-MPI execution, allowing performance differences to be attributed solely to operator realization. In the matrix-based approach, a global sparse matrix is assembled and applied via sparse matrix–vector products, while the matrix-free approach applies the operator on the fly using cell-wise evaluations without forming the matrix. For symmetric diffusion–reaction problems solved with conjugate gradients, the matrix-free method achieves lower time-to-solution due to reduced memory traffic. For nonsymmetric ADR problems solved with restarted GMRES, global reductions limit strong scaling for both approaches, but matrix-free operators remain more efficient. On the largest steady test case with 5.9 million degrees of freedom, matrix-free methods reduce aggregate memory usage by up to 4.75×. A time-dependent implicit Crank–Nicolson test further confirms that matrix-free methods lower both memory consumption and per-iteration solve time when linear systems must be solved at every time step.

Author(s): Zhaohui Song (Politecnico di Milano)
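The difference between the two operator realizations can be shown on a deliberately small problem: a 1-D diffusion–reaction operator (−u″ + c u) with linear elements on a uniform mesh and no boundary conditions. This is far simpler than the 3-D Q3 setting of the study. The matrix-based path assembles and stores a CSR matrix. The matrix-free path recomputes each cell's contribution from nodal values on every application. All names are hypothetical, and the code is not deal.II.

```python
from typing import Dict, List, Tuple

CSR = Tuple[List[int], List[int], List[float]]  # (indptr, indices, data)


def assemble_csr(n_cells: int, h: float, c: float) -> CSR:
    """Matrix-based: assemble -u'' + c*u for linear elements and store it in CSR form."""
    k, m = 1.0 / h, c * h / 6.0
    local = [[k + 2 * m, -k + m], [-k + m, k + 2 * m]]  # cell stiffness + cell mass
    rows: List[Dict[int, float]] = [dict() for _ in range(n_cells + 1)]
    for e in range(n_cells):
        dofs = (e, e + 1)
        for a in range(2):
            for b in range(2):
                rows[dofs[a]][dofs[b]] = rows[dofs[a]].get(dofs[b], 0.0) + local[a][b]
    indptr, indices, data = [0], [], []
    for row in rows:
        for col in sorted(row):
            indices.append(col)
            data.append(row[col])
        indptr.append(len(indices))
    return indptr, indices, data


def spmv(A: CSR, x: List[float]) -> List[float]:
    """Sparse matrix-vector product y = A x."""
    indptr, indices, data = A
    return [sum(data[k] * x[indices[k]] for k in range(indptr[i], indptr[i + 1]))
            for i in range(len(indptr) - 1)]


def matrix_free_apply(n_cells: int, h: float, c: float, x: List[float]) -> List[float]:
    """Matrix-free: recompute each cell's contribution from its nodal values; no matrix stored."""
    y = [0.0] * (n_cells + 1)
    for e in range(n_cells):
        x0, x1 = x[e], x[e + 1]
        # Integral of u' * phi_i' over the cell is -(x1 - x0)/h for the left node, +(x1 - x0)/h for the right.
        flux = (x1 - x0) / h
        y[e] += -flux + c * h / 6.0 * (2 * x0 + x1)
        y[e + 1] += flux + c * h / 6.0 * (x0 + 2 * x1)
    return y
```

Both paths give the same result up to rounding. The CSR path, however, also stores 3(n + 1) − 2 values plus as many column indices, and this is the memory traffic that matrix-free application avoids. In 3-D with Q3 elements the rows are far denser, so the gap is much larger.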

Medulla: Cluster- and Application-level I/O Performance Diagnosis with LLMs

Modern High-Performance Computing (HPC) I/O systems offer immense bandwidth but are notoriously difficult to utilize effectively. Identifying critical bottlenecks is a challenge that persists for both domain scientists and cluster administrators. Existing analysis tools can identify common bottlenecks but often lack interpretability, process only single logs at a time, and fail to capture patterns across multiple runs. Inspired by the semantic reasoning capabilities of Large Language Models (LLMs), we introduce Medulla, a novel diagnosis framework. Medulla provides two interfaces: an interactive CLI for natural language querying and an automated report generator for batch analysis. We evaluate Medulla on the TraceBench diagnosis benchmark, demonstrating that it outperforms state-of-the-art tools such as Drishti and IOAgent, achieving an F1-score of 0.80 compared to 0.63 and 0.68, respectively. Furthermore, using production logs from the ALCF Polaris supercomputer, we show how Medulla identifies critical user-level anomalies, such as massive redundant read patterns, that traditional tools overlook.

Author(s): Anish Sathyanarayanan (BITS Pilani K K Birla Goa Campus)
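The "massive redundant read" pattern mentioned for Polaris can be expressed as a simple ratio of bytes read to file size, aggregated across runs. The sketch below is a deterministic heuristic for illustration, not Medulla's LLM-based reasoning. The record fields (`job_id`, `path`, `bytes_read`, `file_size`) and the threshold of 10 are assumptions, loosely analogous to the per-file byte counters found in I/O characterization logs.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Set, Tuple


def redundant_reads(records: Iterable[Dict], threshold: float = 10.0) -> List[Tuple[str, float, int]]:
    """Flag files read many times over their size; returns (path, read/size ratio, number of runs)."""
    read_bytes: Dict[str, int] = defaultdict(int)
    size: Dict[str, int] = defaultdict(int)
    runs: Dict[str, Set[str]] = defaultdict(set)
    for r in records:
        path = r["path"]
        read_bytes[path] += r["bytes_read"]           # aggregate across all runs
        size[path] = max(size[path], r["file_size"])
        runs[path].add(r["job_id"])
    flagged = []
    for path, total in read_bytes.items():
        if size[path] > 0 and total / size[path] >= threshold:
            flagged.append((path, total / size[path], len(runs[path])))
    # Most redundant files first.
    return sorted(flagged, key=lambda item: item[1], reverse=True)
```

Suppose a 100-byte file is read for 500 bytes in one job and 600 bytes in another. It is then reported with a ratio of 11.0 across 2 runs.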

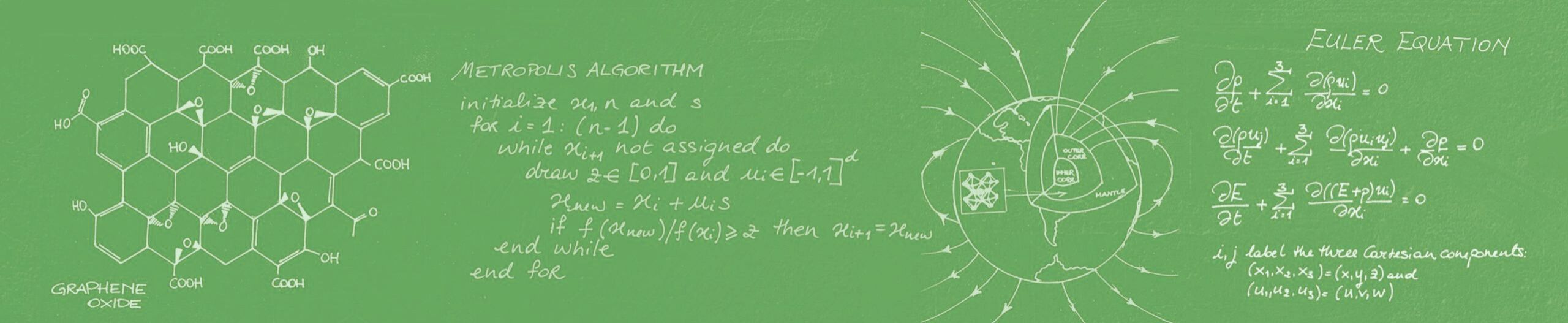

Mixed Precision Acceleration of Light Matter Dynamics: Enabling HPC Co-design for Quantum Material Discovery

Light-matter dynamics in topological quantum materials holds promise for a sustainable society amid ubiquitous and power-hungry artificial intelligence (AI), by enabling ultralow-power (attojoule) and ultrafast (petahertz) computing and sensing devices. A key challenge is simulating multiple field and particle equations for light, electrons, and atoms over vast spatiotemporal scales. Meanwhile, high-performance computing is at a historic crossroads: traditional modeling and simulation applications may not survive the increasingly heterogeneous, low-precision focus of hardware architectures driven by the AI-dominated market. This work resolves the resulting performance-accuracy dichotomy through mixed-precision acceleration, thereby suggesting new algorithm-hardware co-design pathways for a wide variety of multiscale/multiphysics problems in the post-exascale era.

Author(s): Taufeq Mohammed Razakh (University of Southern California)

Mosaic: Automatic Categorization of I/O Patterns from Scientific Applications

While newly deployed High-Performance Computing (HPC) systems embed more compute capability than previous generations, parallel file systems (PFS) are struggling to keep pace, widening the gap between computing power and I/O performance. As new paradigms and technologies, such as tier-based systems and local storage buffers, are deployed to provide faster storage access, it is essential to understand the I/O behavior of applications in order to take advantage of these resources. Such knowledge facilitates the design of efficient I/O optimizations and gives a better understanding of the load experienced by the PFS. In this context, we developed MOSAIC, a Python library that processes I/O traces collected at machine scale and classifies them based on I/O patterns. MOSAIC extracts patterns along three pillars: I/O temporality, I/O periodicity, and metadata requests. This provides a high-level understanding of how and when applications access data, and of how those patterns evolve across time and machines. We validate MOSAIC on datasets from two top-tier supercomputers, revealing a clear correlation between large data writes and periodicity. We also explore pattern variations across multiple runs of the same application or of applications launched by the same user.

Author(s): Théo Jolivel (INRIA, Université de Rennes)
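Periodicity, one of MOSAIC's three pillars, can be approximated by checking whether the gaps between successive I/O phases are nearly constant. This coefficient-of-variation test is an assumed heuristic, not MOSAIC's actual algorithm. It expects timestamps of phases (e.g. write bursts) rather than of individual calls. `is_periodic` and its thresholds are hypothetical.

```python
from statistics import mean, pstdev
from typing import List, Optional, Tuple


def is_periodic(timestamps: List[float], max_cv: float = 0.1,
                min_events: int = 3) -> Tuple[bool, Optional[float]]:
    """Return (periodic?, mean period) for a sequence of I/O phase start times.

    The phases count as periodic if the coefficient of variation (std / mean)
    of the inter-arrival times is at most max_cv.
    """
    ts = sorted(timestamps)
    if len(ts) < min_events:
        return False, None
    gaps = [later - earlier for earlier, later in zip(ts, ts[1:])]
    period = mean(gaps)
    if period <= 0:
        return False, None
    return pstdev(gaps) / period <= max_cv, period
```

Phases at 0, 60, 120.5, 180, and 241 s are classified as periodic with a mean period of 60.25 s. Phases at 0, 5, 100, 103, and 300 s are not.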

net_prof: Exascale Network Interface-Level Profiling

Exascale applications depend on low-latency, high-throughput interconnects, yet network-interface telemetry is rarely practical for routine performance investigation. On the Aurora exascale system, the eight HPE Cassini NICs on each compute node together expose more than 15,000 counters, but the values are poorly organized and hard to associate with specific operations. We present net_prof, a lightweight node-local profiler that snapshots NIC counters before and after targeted events, computes deltas, and transforms raw counters into structured, comparable measurements. net_prof groups counters into high-level classes, ranks signals by delta magnitude, and reports results per NIC through machine-readable JSON and summarized HTML pages. We evaluate net_prof on repeated point-to-point microbenchmarks (ping) and show that the snapshot-and-delta workflow produces consistent delta signatures across runs and across NICs on healthy nodes. This summarized telemetry exposes baselines that help separate always-on background activity from workload-driven changes and highlight localized anomalies when a single NIC deviates from the expected pattern. Overall, net_prof lowers the barrier to using Cassini telemetry and enables event-oriented, per-NIC diagnosis for exascale network performance and troubleshooting. The approach is non-intrusive and can be integrated into existing test workflows to build reference signatures for tuning and regression testing and to guide decision making.

Author(s): Anthony Cardia (Elmhurst University)
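The snapshot-and-delta workflow can be sketched as follows: compute deltas, group them into classes, rank them by magnitude, and compare NICs. The counter names, the prefix-to-class table, and the median-based deviation rule are assumptions for illustration. They are not Cassini's actual counter names or net_prof's implementation.

```python
import json
from statistics import median
from typing import Dict, List, Tuple

Counters = Dict[str, int]

# Hypothetical name prefixes mapped to high-level classes; real Cassini counter names differ.
CLASSES = {"rx_": "receive", "tx_": "transmit", "err_": "error"}


def counter_deltas(before: Counters, after: Counters) -> Counters:
    """Change of every counter present in both snapshots taken around an event."""
    return {name: after[name] - before[name] for name in sorted(after.keys() & before.keys())}


def summarize(delta: Counters, top: int = 5) -> Dict:
    """Sum deltas per class and rank individual non-zero counters by |delta|."""
    by_class: Dict[str, int] = {}
    for name, d in delta.items():
        cls = next((c for prefix, c in CLASSES.items() if name.startswith(prefix)), "other")
        by_class[cls] = by_class.get(cls, 0) + d
    ranked: List[Tuple[str, int]] = sorted(
        ((name, d) for name, d in delta.items() if d != 0),
        key=lambda item: abs(item[1]), reverse=True)
    return {"classes": by_class, "top": ranked[:top]}


def deviating_nics(summaries: Dict[str, Dict], cls: str, factor: float = 2.0) -> List[str]:
    """NICs whose class total differs from the median of their peers by more than `factor`."""
    totals = {nic: s["classes"].get(cls, 0) for nic, s in summaries.items()}
    mid = median(totals.values())
    if mid <= 0:
        return []
    return sorted(nic for nic, v in totals.items() if v > factor * mid or v < mid / factor)


def report(summaries: Dict[str, Dict]) -> str:
    """Machine-readable per-NIC output."""
    return json.dumps(summaries, indent=2, sort_keys=True)
```

Suppose four NICs report transmit deltas of 100, 110, 95, and 400 for the same ping test. The median is then 105, and only the fourth NIC is flagged, matching the idea of a single NIC deviating from the expected pattern.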

Semantic-Aware Implicit Neural Compression for Physics Simulations

Machine learning surrogates and data-driven scientific discovery require efficient access to simulation data, yet physics simulations generate terabyte-scale datasets. Traditional compression either achieves insufficient ratios or corrupts physics-critical features such as conservation laws. Implicit neural representations offer a promising alternative, but adoption has been limited by lengthy training times and dataset-specific fitting. We present SINCPS, which leverages wafer-scale computing to train each model in 2 to 3 hours. Across 22 datasets from The Well benchmark, we achieve compression ratios of 150× to 25,000×. Turbulent flows and 3D data remain challenging (13 dB), but half of the datasets exceed 20 dB, enabling integration of large simulation archives into discovery workflows.

Author(s): Jessica Ezemba (Carnegie Mellon University)
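The reported figures can be related to simple arithmetic. The sketch below assumes that the compression ratio compares raw float32 field size with float32 network weights, and that the dB values are PSNR relative to the data range. The abstract states neither, and the function names are hypothetical.

```python
import math
from typing import Sequence


def compression_ratio(n_values: int, n_params: int,
                      bytes_per_value: int = 4, bytes_per_param: int = 4) -> float:
    """Raw field size divided by the size of the network weights that replace it."""
    return (n_values * bytes_per_value) / (n_params * bytes_per_param)


def psnr(original: Sequence[float], reconstructed: Sequence[float]) -> float:
    """Peak signal-to-noise ratio in dB, using the data range of the original as the peak."""
    mse = sum((a - b) ** 2 for a, b in zip(original, reconstructed)) / len(original)
    if mse == 0.0:
        return math.inf
    data_range = max(original) - min(original)
    return 20.0 * math.log10(data_range) - 10.0 * math.log10(mse)


def rmse_fraction_of_range(db: float) -> float:
    """Invert PSNR: RMSE as a fraction of the data range for a given dB value."""
    return 10.0 ** (-db / 20.0)
```

Under that reading, 20 dB corresponds to an RMSE of 10% of the data range and 13 dB to roughly 22%. Likewise, a 512 × 512 × 200 float32 field represented by 200,000 float32 weights has a compression ratio of about 262.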

Solver-Integrated Lossy and Lossless Compression for Scalable Flow Simulations

Large-scale computational fluid dynamics (CFD) simulations on modern GPU-accelerated supercomputers generate terabytes of data per run, making checkpointing, storage, and post-hoc analysis increasingly I/O-bound. This bottleneck limits data retention and hinders downstream workflows such as visualization and data-driven modeling. In this work, we integrate both lossless and error-bounded lossy compression directly into the GPU-enabled spectral element solver nekRS, enabling scalable, solver-integrated data reduction with minimal communication overhead. Our approach adopts an embarrassingly parallel design in which each MPI rank independently compresses its local solution fields during checkpointing and writes compressed buffers using MPI-IO. We evaluate lossless compression using Blosc2 for restart files and lossy compression using SZ3 for analysis-oriented outputs. Results from a large-scale jet-in-crossflow simulation demonstrate that lossy compression achieves compression ratios exceeding 100× while preserving key flow structures and vortex topology, and reduces I/O time by up to 8× compared to uncompressed output. Lossless compression provides bitwise reproducibility with high throughput for reliable restarts. These results show that solver-integrated compression can significantly alleviate I/O bottlenecks in large-scale CFD and enable more efficient data-centric workflows on emerging HPC systems.

Author(s): Viral Sudip Shah (University of Illinois Urbana-Champaign)
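The per-rank design can be illustrated with standard-library stand-ins. Uniform quantization with bin width 2ε bounds the pointwise error by ε; this is the core idea behind error-bounded lossy compressors, and SZ-family compressors add a prediction step before quantizing. zlib replaces Blosc2 for the lossless path. An exclusive prefix sum of compressed sizes gives each rank its MPI-IO write offset, as `MPI_Exscan` would. This is a sketch under those assumptions, not the nekRS integration, and all names are hypothetical.

```python
import struct
import zlib
from itertools import accumulate
from typing import List


def lossy_compress(values: List[float], abs_err: float) -> bytes:
    """Quantize with bin width 2*abs_err (|error| <= abs_err), then compress the integer codes."""
    step = 2.0 * abs_err
    codes = [round(v / step) for v in values]
    return zlib.compress(struct.pack(f"<{len(codes)}q", *codes))


def lossy_decompress(blob: bytes, abs_err: float) -> List[float]:
    raw = zlib.decompress(blob)
    codes = struct.unpack(f"<{len(raw) // 8}q", raw)
    return [c * 2.0 * abs_err for c in codes]


def lossless_compress(values: List[float]) -> bytes:
    """Bit-exact path for restart data: raw float64 bytes through zlib."""
    return zlib.compress(struct.pack(f"<{len(values)}d", *values))


def lossless_decompress(blob: bytes) -> List[float]:
    raw = zlib.decompress(blob)
    return list(struct.unpack(f"<{len(raw) // 8}d", raw))


def write_offsets(sizes: List[int]) -> List[int]:
    """Exclusive prefix sum: byte offset at which each rank writes its variable-size buffer."""
    return list(accumulate(sizes, initial=0))[:-1]
```

For example, compressed sizes of 10, 4, and 7 bytes on three ranks yield write offsets 0, 10, and 14.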

Stability and Accuracy of the r²SCAN Functional for Group-IV Elemental Solids in a Cost-Aware Workflow Perspective

Density-functional theory (DFT) is a central tool in computational materials science, and its predictive power depends critically on the choice of exchange-correlation functional. Here we benchmark the meta-GGA r²SCAN in a study of the group-IV elemental solids C, Si, Ge, and Sn, focusing on stability, accuracy, and computational cost in lattice-dynamics calculations. Relative to SCAN, r²SCAN is markedly more numerically robust while delivering comparable accuracy for elastic and vibrational properties, at a cost well below hybrid-level HSE. A key limitation emerges for phase stability: for the α ↔ β transition in Ge and Sn, r²SCAN yields less reliable energy and transition-pressure predictions, motivating targeted validation for phase-ranking tasks.

Author(s): Adonis Haxhijaj (EPFL, ETH Zurich)
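The sensitivity of transition pressures to energy errors follows from the standard zero-temperature coexistence condition. The block below is a first-order estimate offered for context, not necessarily the workflow used in this study. Here E and V are per-atom energies and volumes of the α and β phases.

```latex
% Coexistence at T = 0: equal enthalpy H = E + PV at the same pressure P_t
H_\alpha(P_t) = H_\beta(P_t), \qquad H_i(P) = E_i\big(V_i(P)\big) + P\,V_i(P)

% Rearranging E_\alpha + P_t V_\alpha = E_\beta + P_t V_\beta (common-tangent form,
% with E and V evaluated at the coexisting volumes):
P_t = \frac{E_\beta - E_\alpha}{V_\alpha - V_\beta}

% First-order sensitivity to an error \delta(\Delta E) in \Delta E = E_\beta - E_\alpha,
% holding the volumes fixed:
\delta P_t \approx \frac{\delta(\Delta E)}{V_\alpha - V_\beta}
```

With E in eV/atom and V in Å³/atom, P comes out in eV/Å³ (1 eV/Å³ ≈ 160.2 GPa). When the volume difference between the phases is modest, even a small error in ΔE shifts P_t appreciably. This is consistent with the abstract's call for targeted validation of phase-ranking tasks.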